Holes in Bayesian Statistics

1. Paper information

Authors: Andrew Gelman, Yuling Yao

Link: https://arxiv.org/abs/2002.06467

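I came across this paper while scrolling through a daily arXiv digest column: the word "Bayesian" caught my eye and piqued my interest. Then I saw that the first author is Andrew Gelman, a leading authority on Bayesian data analysis whose Bayesian Data Analysis is a classic textbook, now in its third edition. And now this heavyweight wants to discuss the holes in Bayesian statistics. Is he setting out to overturn his own work? It is also worth noting that the paper was published in Journal of Physics G, which left me both curious and amazed.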

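The second author's name suggested that he is Chinese, so I followed the trail to his homepage: he is a sixth-year PhD student in the Department of Statistics at Columbia University. I found his blog post "Career development" quite instructive. It covers his job-hunting experience and advice, and shows that even someone this accomplished runs into a few bumps. One sentence stuck with me: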

But the cynical part of me, which gets amplified after all these, has the following cynical advice to future job seeker.

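It very much echoes the classical line "悟已往之不谏,知来者犹可追" (the past cannot be undone, but the future can still be pursued).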

The blog post also mentions this experience:

I am not extremely thrilled with the fact that most tech companies requires no more statistical knowledge than the level of the first three chapters of “regression and other story”–not saying it is not a good book. But I guess a MD would be depressed too if they were only examined by their knowledge on babysitting skills.

I feel I should read the first three chapters of RAOS myself, just enough to get the gist without going too deep.

As for the drawbacks of Bayesian statistics, a highly upvoted answer on stackexchange lists the following [1]:

1)Choice of prior

2)It’s computationally intensive

3)Posterior distributions are somewhat more difficult to incorporate into a meta-analysis

A set of course slides also weighs the pros and cons of Bayesian statistics [2]:

Pros of Bayesian Statistics:

1)combine prior info with data

2)provide exact estimate w/o reliance on large sample size

3)can directly estimate any functions of parameters or any quantities of interest

4)obey the likelihood principle

5)provide interpretable answers (credible intervals have more intuitive meanings)

6)can be applied to a wide range of models, e.g. hierarchical models, missing data…

Cons of Bayesian Statistics:

1)prior selection

2)posterior may be heavily influenced by the priors (informative prior+ small data size)

3)high computational cost

4)simulations provide slightly different answers

5)no guarantee of Markov chain Monte Carlo (MCMC) convergence
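To make the second con concrete, here is a minimal sketch using a conjugate Beta-Binomial model. The data and the two priors are made up for illustration (they do not come from the slides): an informative prior dominates a small sample, but is largely washed out once the sample is 100 times larger.

```python
def beta_posterior_mean(prior_a, prior_b, successes, failures):
    """Posterior mean of a Beta(prior_a, prior_b) prior after binomial data."""
    return (prior_a + successes) / (prior_a + prior_b + successes + failures)

# Hypothetical small sample: 3 successes in 4 trials (sample proportion 0.75).
small_flat = beta_posterior_mean(1, 1, 3, 1)           # flat Beta(1, 1) prior
small_informative = beta_posterior_mean(2, 18, 3, 1)   # prior centred near 0.1

# Same sample proportion with 100 times more data.
large_flat = beta_posterior_mean(1, 1, 300, 100)
large_informative = beta_posterior_mean(2, 18, 300, 100)

print(f"n=4:   flat {small_flat:.3f}, informative {small_informative:.3f}")
print(f"n=400: flat {large_flat:.3f}, informative {large_informative:.3f}")
```

With four observations the two posterior means are far apart (roughly 0.67 versus 0.21); with four hundred they differ by only about 0.03.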

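Reading through the comments on this paper is also quite entertaining. You can pick up plenty of techniques for persuading and rebutting, as well as ways to open a comment, for example: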

“Long time follower of this blog, but never commented before” [3]

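The comments include plenty of head-on disagreement, and real insight only emerges gradually over several rounds of exchange. Even objections are phrased modestly, such as: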

“Am I just not understanding?” [4]

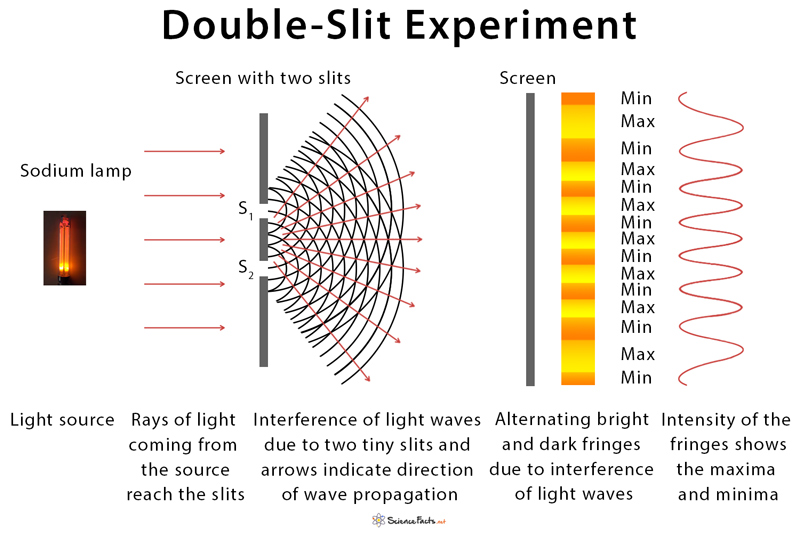

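Some of the debate centers on the relationship between Bayesian statistics and quantum mechanics. Andrew Gelman has discussed the famous double-slit experiment several times before [5].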

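All this gives a real sense that "academic exchange through blogs genuinely exists abroad". Reading the comments around a paper can sharpen critical and scientific thinking: argue from reasons and evidence, and do not defer blindly to authority.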

2. Paper abstract

Abstract

Here are a few holes in Bayesian data analysis:

1) the usual rules of conditional probability fail in the quantum realm

2) flat or weak priors lead to terrible inferences about things we care about,

3) subjective priors are incoherent,

4) Bayesian decision picks the wrong model,

5) Bayes factors fail in the presence of flat or weak priors,

6) for Cantorian reasons we need to check our models, but this destroys the coherence of Bayesian inference

3. Main text

Latent variables don’t always exist (quantum physics)

There are two difficulties here.

The first is that Bayesian statistics is always presented in terms of a joint distribution of all parameters, latent variables, and observables; but in quantum mechanics there is no joint distribution or hidden-variable interpretation. Quantum superposition is not merely probabilistic (Bayesian) uncertainty—or, if it is, we require a more complex probability theory that allows for quantum entanglement.

The second challenge that the uncertainty principle poses for Bayesian statistics is that when we apply probability theory to analyze macroscopic data such as experimental measurements in the biological and physical sciences or surveys, experiments, and observational studies in the social sciences, we routinely assume a joint distribution and we routinely treat the act of measurement as a direct application of conditional probability. If classical probability theory needs to be generalized to apply to quantum mechanics, then it makes us wonder if it should be generalized for applications in political science, economics, psychometrics, astronomy, and so forth.
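To get a feel for the first difficulty, here is a toy two-slit calculation. It is only a sketch under idealised assumptions (two unit-magnitude amplitudes whose phase depends on path length; the function names and the normalisation are my own choices, not taken from the paper). The classical rule treats "which slit" as a latent variable and averages the one-slit intensities; the quantum rule adds amplitudes first and squares afterwards, and the two disagree.

```python
import cmath
import math

def amplitude(path_length, wavelength=1.0):
    """Unit-magnitude complex amplitude whose phase is set by the path length."""
    return cmath.exp(2j * math.pi * path_length / wavelength)

def both_slits_open(l1, l2):
    """Quantum rule: add amplitudes, then square (scaled so the phase-average is 1)."""
    return abs(amplitude(l1) + amplitude(l2)) ** 2 / 2

def classical_mixture(l1, l2):
    """Classical rule: average one-slit intensities over a latent 'which slit'."""
    return 0.5 * abs(amplitude(l1)) ** 2 + 0.5 * abs(amplitude(l2)) ** 2

# Path differences of 0, 1/4 and 1/2 wavelength (hypothetical geometry).
for extra in (0.0, 0.25, 0.5):
    print(extra, both_slits_open(10.0, 10.0 + extra), classical_mixture(10.0, 10.0 + extra))
```

The mixture is constant, while the two-slit intensity swings between bright and dark fringes, so no assignment of "the particle went through slit 1 or slit 2" reproduces it by ordinary marginalisation.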

Weak priors yield unreasonably strong inferences (taking posterior probabilities seriously)

Indeed, Bayesian statisticians are often wary of strong priors because they want to “let the data speak.” The trouble is that letting the data speak in this way will result in many erroneously strong statements. Paradoxically, weak priors imply inappropriately strong conclusions in certain dimensions of the posterior, a point which we have pointed out before in more complex multivariate examples; see section 3 of Gelman (1996). Again, our point is not that a high-variance prior for a parameter of interest is necessarily wrong; rather we are emphasizing that using such a prior has implications for the applicability of the model, as it can result in strong posterior claims from what might seem like weak evidence.
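A minimal sketch of how this can happen, under an assumed setup: a single estimate y with standard error 1, a normal likelihood, and a flat prior on θ. The numbers are illustrative and are not claimed to reproduce the paper's own calculation. With a flat prior the posterior is θ | y ~ N(y, 1), so an estimate only one standard error above zero already gives a large posterior probability that θ is positive.

```python
import math

def normal_cdf(x, mean=0.0, sd=1.0):
    """Normal CDF via the error function (standard library only)."""
    return 0.5 * (1.0 + math.erf((x - mean) / (sd * math.sqrt(2.0))))

def prob_theta_positive(y, se=1.0):
    """P(theta > 0 | y) when y ~ N(theta, se^2) and the prior on theta is flat."""
    # With a flat prior the posterior is theta | y ~ N(y, se^2).
    return 1.0 - normal_cdf(0.0, mean=y, sd=se)

p = prob_theta_positive(1.0)   # estimate one standard error above zero
odds = p / (1.0 - p)
print(f"P(theta > 0 | y = 1) = {p:.3f}, odds about {odds:.1f} to 1")
```

The result is a posterior probability of about 0.84, or odds of roughly 5 to 1, from data that would not usually be considered strong evidence of anything.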

The incoherence of subjective priors (Bayesian updating)

This has implications for Bayesian practice (openness to checking and revising models) and for Bayesian theory as well: the modeling process cannot be viewed as a closed system. The previous section demonstrated a serious, even potentially fatal, problem with noninformative or weak priors; the present section demonstrates that we cannot, except in some special cases, assume that subjectivity implies logical coherence.

Bayesian decision theory can be tricky (model evaluation)

In this example, the mistake is that the calculation above mixes the continuous probability density and the discrete probability mass function

Failure of Bayes factors (strong dependence on irrelevant aspects of the model)

In the literature on the Bayes factor, solutions to this problem have been proposed, along the lines of setting some conventional prior on parameters within each of the candidate models. But we have never found these arguments convincing—and, to the extent that they are convincing, they run counter to the goal of coherence, by which prior distributions are intended to represent actual populations to be averaged over when evaluating inferences.
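The dependence on the prior can be seen in a standard textbook setup, which I use here as an assumption rather than as the paper's own example: one observation y ~ N(θ, 1), a point null M0: θ = 0, and an alternative M1: θ ~ N(0, τ²). The marginal of y under M1 is N(0, 1 + τ²), so the Bayes factor in favour of the null grows without bound as the prior scale τ increases, even though the data stay the same.

```python
import math

def normal_pdf(x, sd):
    """Density of N(0, sd^2) evaluated at x."""
    return math.exp(-0.5 * (x / sd) ** 2) / (sd * math.sqrt(2.0 * math.pi))

def bayes_factor_null_vs_alt(y, prior_sd):
    """Bayes factor of M0: theta = 0 against M1: theta ~ N(0, prior_sd^2)."""
    marginal_m0 = normal_pdf(y, 1.0)                              # y ~ N(0, 1)
    marginal_m1 = normal_pdf(y, math.sqrt(1.0 + prior_sd ** 2))   # y ~ N(0, 1 + tau^2)
    return marginal_m0 / marginal_m1

y = 2.0  # hypothetical observation, two standard errors from zero
for prior_sd in (1, 10, 100, 1000):
    print(prior_sd, round(bayes_factor_null_vs_alt(y, prior_sd), 2))
```

Moving τ from 1 to 1000 flips the Bayes factor from favouring the alternative to strongly favouring the null, although a prior that wide barely changes the posterior for θ within M1.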

Cantor’s corner (model updating)

Cantor’s diagonal argument, taken metaphorically, is that we can never fully anticipate what is needed in future models

our point is that the potentially unlimited nature of Cantorian updating reveals another way in which Bayesian inference cannot hope to be coherent in the sense of being expressible as conditional statements from a single joint distribution.

Implications for statistical practice

there will always be more aspects of reality that have not yet been included in any model. Lest this be seen as an endorsement of some sort of flabby humanism of the “scientists are people too” variety, let us emphasize that the same issues arise with extrapolation and generalization from automatic machine learning algorithms.

4. Discussion

There are various reasons to care about holes in Bayesian statistics:

we want to avoid these holes in our applied work, and we want to be aware of alternative approaches, going beyond default noninformative models or naive overconfidence in subjective models.

we should be appropriately wary of statistical inferences produced using any method, Bayesian or otherwise, as the Cantorian argument implies that coherence will never be within reach.

“rationality (both in the common-sense and statistical meanings of the word) is complex. At any given time, different statistical philosophies will be useful in solving different applied problems. As Bayesian researchers, we take this not as a reason to give up in some areas but rather as a motivation to improve our methods: if a non-Bayesian method works well, we want to understand how.”

Bayesian statistics has no holes in theory, only in practice. When the model and all model assumptions are taken for granted, the Bayesian procedure is unique. But we prefer to break such enforced coherence by questioning the validity of the model and assumptions. On the other hand, perhaps these practical holes imply the existence of a theoretical hole, if theoretical statistics indeed is the theory of applied statistics.

5. Appendix

Things that seem like problems with Bayesian inference but aren’t

Bayes as a pretty but impractical theory

A famous probabilist once wrote that Bayesian methods “can be defended but not applied” (Feller, 1950). There is a pragmatist appeal to the idea that Bayesian inference is doomed by its own consistency, but ultimately we find this particular anti-Bayesian argument unconvincing, for two reasons. First, much has changed since 1950, and Bayesian methods can and are applied in many areas of science, engineering, and policy. Second, the consistency of Bayesian inference is only a problem if you are inflexible.

Bayes as an automatic inference engine

we sometimes hear the reverse criticism, that Bayesian models can be fit too easily and thoughtlessly, in contrast to classical inference, where the properties of statistical models have to be fit laboriously, one at a time. We agree that the relative ease of Bayesian model fitting puts more of a burden on model evaluation—with great power comes great responsibility—but we take this as a criticism of rigid, fixed-model Bayesian inference, not applying to the open version discussed in section 7.

Bayesian inference as subjective and thus nonscientific

We have proposed to replace the words “objective” and “subjective” in statistics discourse with broader collections of attributes, with objectivity replaced by transparency, consensus, impartiality, and correspondence to observable reality, and subjectivity replaced by awareness of multiple perspectives and context dependence (Gelman and Hennig, 2017).